Build MegaService of FAQ Generation on Gaudi¶

This document outlines the deployment process for a FAQ Generation application that uses the GenAIComps microservice pipeline on an Intel Gaudi server. The steps include Docker image creation, container deployment via Docker Compose, and service execution to integrate microservices such as llm. The Docker images will be published to Docker Hub, which will simplify deployment of this service.

Quick Start:¶

Set up the environment variables.

Run Docker Compose.

Consume the FaqGen Service.

Quick Start: 1. Set Up Environment Variables¶

To set up environment variables for deploying the FaqGen services, follow these steps:

Set the required environment variables:

# Example: host_ip="192.168.1.1"

export host_ip=$(hostname -I | awk '{print $1}')

export HUGGINGFACEHUB_API_TOKEN="Your_Huggingface_API_Token"

If you are in a proxy environment, also set the proxy-related environment variables:

export http_proxy="Your_HTTP_Proxy"

export https_proxy="Your_HTTPs_Proxy"

# Example: no_proxy="localhost, 127.0.0.1,192.168.1.1"

export no_proxy="localhost, 127.0.0.1,192.168.1.1, ${host_ip}"

Set up other environment variables:

export LLM_MODEL_ID="meta-llama/Meta-Llama-3-8B-Instruct"

export TGI_LLM_ENDPOINT="http://${host_ip}:8008"

export MEGA_SERVICE_HOST_IP=${host_ip}

export LLM_SERVICE_HOST_IP=${host_ip}

export LLM_SERVICE_PORT=9000

export BACKEND_SERVICE_ENDPOINT="http://${host_ip}:8888/v1/faqgen"
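Because Docker Compose reads these variables at startup, it can help to confirm they are all set before going further. The following is a minimal sketch, not part of GenAIExamples: `check_required_env` is a hypothetical helper name, and the list of variables to pass is taken from the exports in this section.

```shell
# Sketch: report which of the named variables are unset or empty.
# check_required_env is a hypothetical helper, not part of GenAIExamples.
check_required_env() {
  missing=""
  for name in "$@"; do
    # Indirect lookup of the variable whose name is stored in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      missing="$missing $name"
    fi
  done
  if [ -n "$missing" ]; then
    echo "Missing environment variables:$missing" >&2
    return 1
  fi
  return 0
}
```

For example, `check_required_env host_ip HUGGINGFACEHUB_API_TOKEN LLM_MODEL_ID TGI_LLM_ENDPOINT` returns a non-zero status and lists the missing names on stderr if any of them is empty.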

Quick Start: 2. Run Docker Compose¶

docker compose up -d

Docker Compose automatically pulls the Docker images from Docker Hub. Check the status of the containers with the following commands:

docker ps -a

docker logs tgi-gaudi-server -t

The model may take some time to download. You can build the Docker images from source yourself in the following cases:

The Docker image fails to download.

You want to use a specific version of a Docker image.

See "Build Docker Images" below.
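Since the model download can take a while, the TGI endpoint may not answer immediately after `docker compose up -d`. One way to handle this is to retry a probe command until it succeeds. The sketch below is an illustration only: `wait_until` is a hypothetical helper, and the attempt count and delay are arbitrary values to adjust for your environment.

```shell
# wait_until: hypothetical helper that retries a command until it succeeds.
# Usage: wait_until <max_attempts> <delay_seconds> <command> [args...]
wait_until() {
  max_attempts=$1
  delay=$2
  shift 2
  attempt=1
  while :; do
    # Run the probe command; its output is discarded, only the status matters.
    if "$@" >/dev/null 2>&1; then
      echo "ready after $attempt attempt(s)"
      return 0
    fi
    if [ "$attempt" -ge "$max_attempts" ]; then
      break
    fi
    attempt=$((attempt + 1))
    sleep "$delay"
  done
  echo "not ready after $max_attempts attempt(s)" >&2
  return 1
}
```

A possible invocation, reusing the request from this guide, is `wait_until 60 10 curl -sf http://${host_ip}:8008/generate -X POST -H 'Content-Type: application/json' -d '{"inputs":"What is Deep Learning?","parameters":{"max_new_tokens":1}}'`. Here `-f` makes curl return a non-zero status on HTTP errors, so the loop keeps retrying until the server responds successfully.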

Quick Start: 3. Consume the Service¶

curl localhost:8008/generate \
  -X POST \
  -d '{"inputs":"What is Deep Learning?","parameters":{"max_new_tokens":64, "do_sample": true}}' \
  -H 'Content-Type: application/json'

This request only tests the service from the host machine as a quick start. If networking is configured correctly, repeat the request against the host IP:

curl http://${host_ip}:8008/generate \
  -X POST \
  -d '{"inputs":"What is Deep Learning?","parameters":{"max_new_tokens":64, "do_sample": true}}' \
  -H 'Content-Type: application/json'

🚀 Build Docker Images¶

First, build the Docker images locally. This step can be skipped once the Docker images are published to Docker Hub.

1. Pull TGI Gaudi Image¶

As TGI Gaudi has been officially published as a Docker image, we simply need to pull it:

docker pull ghcr.io/huggingface/tgi-gaudi:2.0.6

2. Build LLM Image¶

git clone https://github.com/opea-project/GenAIComps.git

cd GenAIComps

docker build -t opea/llm-faqgen:latest --build-arg https_proxy=$https_proxy --build-arg http_proxy=$http_proxy -f comps/llms/src/faq-generation/Dockerfile .

3. Build MegaService Docker Image¶

To construct the Mega Service, we utilize the GenAIComps microservice pipeline within the faqgen.py Python script. Build the MegaService Docker image using the command below:

git clone https://github.com/opea-project/GenAIExamples

cd GenAIExamples/FaqGen/

docker build --no-cache -t opea/faqgen:latest --build-arg https_proxy=$https_proxy --build-arg http_proxy=$http_proxy -f Dockerfile .

4. Build UI Docker Image¶

Construct the frontend Docker image using the command below:

cd GenAIExamples/FaqGen/ui

docker build -t opea/faqgen-ui:latest --build-arg https_proxy=$https_proxy --build-arg http_proxy=$http_proxy -f ./docker/Dockerfile .

5. Build React UI Docker Image (Optional)¶

Build the React-based frontend Docker image using the command below:

cd GenAIExamples/FaqGen/ui

export BACKEND_SERVICE_ENDPOINT="http://${host_ip}:8888/v1/faqgen"

docker build -t opea/faqgen-react-ui:latest --build-arg https_proxy=$https_proxy --build-arg http_proxy=$http_proxy --build-arg BACKEND_SERVICE_ENDPOINT=$BACKEND_SERVICE_ENDPOINT -f docker/Dockerfile.react .

Then run the command docker images. You should see the following Docker images:

ghcr.io/huggingface/tgi-gaudi:2.0.6

opea/llm-faqgen:latest

opea/faqgen:latest

opea/faqgen-ui:latest

opea/faqgen-react-ui:latest
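Checking the image list by eye is easy to get wrong, so the comparison can be scripted. The sketch below assumes the image list is supplied on stdin as `repository:tag` lines; `missing_images` is a hypothetical helper name, not something shipped with GenAIExamples.

```shell
# missing_images: hypothetical helper. Reads "repository:tag" lines on stdin
# and prints each expected image (given as arguments) that is not in the list.
# Returns non-zero if at least one image is missing.
missing_images() {
  available=$(cat)
  status=0
  for image in "$@"; do
    # -F: fixed string, -x: match the whole line, -q: no output.
    if ! printf '%s\n' "$available" | grep -Fxq -- "$image"; then
      echo "$image"
      status=1
    fi
  done
  return "$status"
}
```

It could be fed from Docker like this: `docker images --format '{{.Repository}}:{{.Tag}}' | missing_images ghcr.io/huggingface/tgi-gaudi:2.0.6 opea/llm-faqgen:latest opea/faqgen:latest opea/faqgen-ui:latest`. Add `opea/faqgen-react-ui:latest` to the arguments only if you built the optional React UI.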

🚀 Start Microservices and MegaService¶

Required Models¶

The default model is "meta-llama/Meta-Llama-3-8B-Instruct". To use a different model, change "LLM_MODEL_ID" in the environment variable settings below.

If you use a gated model, you also need to provide a Hugging Face token in the "HUGGINGFACEHUB_API_TOKEN" environment variable.

Setup Environment Variables¶

Since compose.yaml consumes several environment variables, set them in advance as shown below.

export no_proxy=${your_no_proxy}

export http_proxy=${your_http_proxy}

export https_proxy=${your_http_proxy}

export host_ip=${your_host_ip}

export LLM_ENDPOINT_PORT=8008

export LLM_SERVICE_PORT=9000

export FAQGen_COMPONENT_NAME="OpeaFaqGenTgi"

export LLM_MODEL_ID="meta-llama/Meta-Llama-3-8B-Instruct"

export HUGGINGFACEHUB_API_TOKEN=${your_hf_api_token}

export MEGA_SERVICE_HOST_IP=${host_ip}

export LLM_SERVICE_HOST_IP=${host_ip}

export LLM_ENDPOINT="http://${host_ip}:${LLM_ENDPOINT_PORT}"

export BACKEND_SERVICE_ENDPOINT="http://${host_ip}:8888/v1/faqgen"

Note: Replace the host_ip value with your external IP address; do not use localhost.
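The localhost restriction matters because, inside a container, localhost refers to the container itself rather than to the host, so endpoints built from it would not reach the other services. A small guard can catch this before startup. This is a sketch only: `is_external_host` is a hypothetical helper, and it only rejects the obvious loopback spellings.

```shell
# is_external_host: hypothetical helper that rejects empty and loopback values.
# It does not verify that the address is actually reachable.
is_external_host() {
  case "$1" in
    ""|localhost|127.*|::1) return 1 ;;
    *) return 0 ;;
  esac
}
```

For example, `is_external_host "$host_ip" || echo "host_ip must be an external IP address" >&2` prints a warning when host_ip is empty or points at the loopback interface.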

Start Microservice Docker Containers¶

cd GenAIExamples/FaqGen/docker_compose/intel/hpu/gaudi

docker compose up -d

Validate Microservices¶

TGI Service

curl http://${host_ip}:8008/generate \
  -X POST \
  -d '{"inputs":"What is Deep Learning?","parameters":{"max_new_tokens":64, "do_sample": true}}' \
  -H 'Content-Type: application/json'

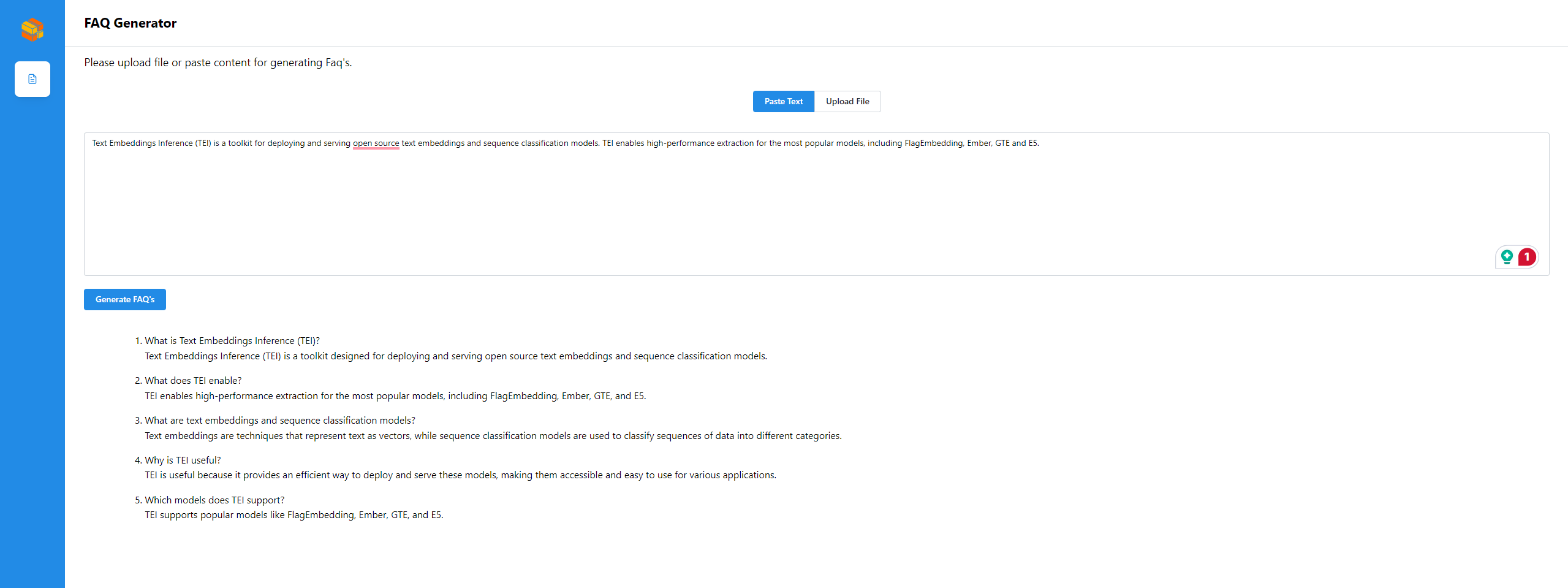

LLM Microservice

curl http://${host_ip}:9000/v1/faqgen \
  -X POST \
  -d '{"query":"Text Embeddings Inference (TEI) is a toolkit for deploying and serving open source text embeddings and sequence classification models. TEI enables high-performance extraction for the most popular models, including FlagEmbedding, Ember, GTE and E5."}' \
  -H 'Content-Type: application/json'

MegaService

curl http://${host_ip}:8888/v1/faqgen \
  -H "Content-Type: multipart/form-data" \
  -F "messages=Text Embeddings Inference (TEI) is a toolkit for deploying and serving open source text embeddings and sequence classification models. TEI enables high-performance extraction for the most popular models, including FlagEmbedding, Ember, GTE and E5." \
  -F "max_tokens=32" \
  -F "stream=False"

# Enable streaming
curl http://${host_ip}:8888/v1/faqgen \
  -H "Content-Type: multipart/form-data" \
  -F "messages=Text Embeddings Inference (TEI) is a toolkit for deploying and serving open source text embeddings and sequence classification models. TEI enables high-performance extraction for the most popular models, including FlagEmbedding, Ember, GTE and E5." \
  -F "max_tokens=32" \
  -F "stream=True"

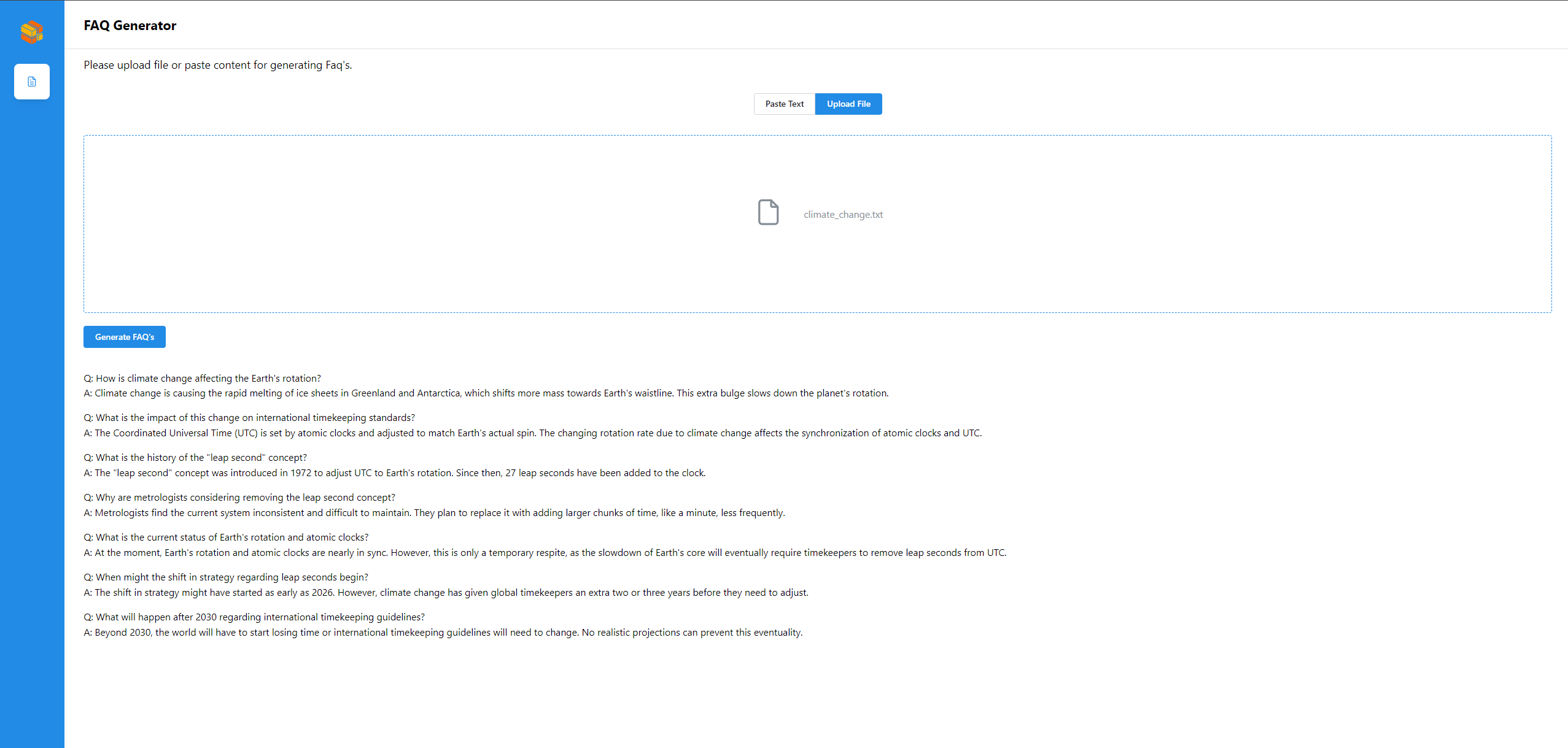

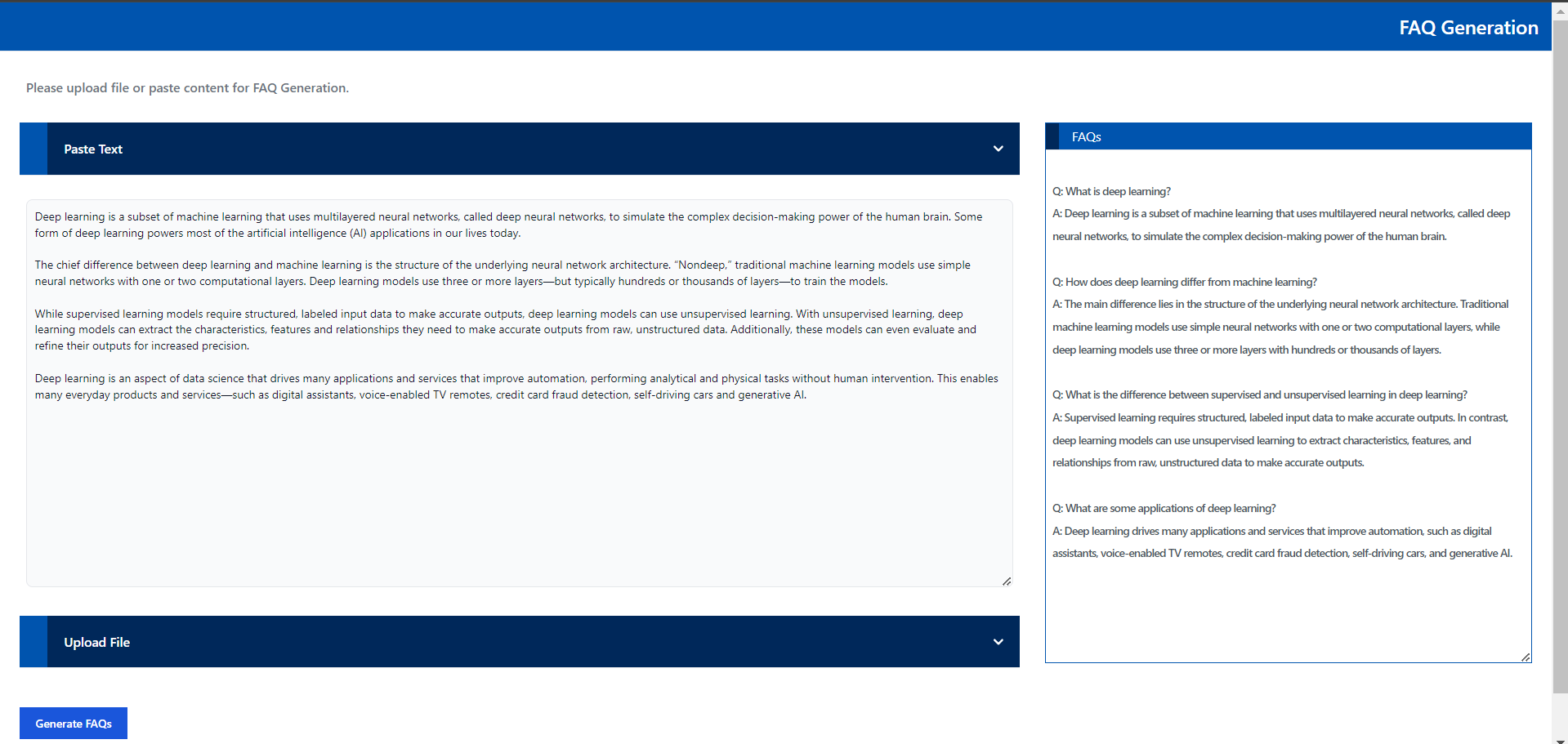
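The validation requests above can also be wrapped so that each one reports a simple pass or fail based on the HTTP status code. The sketch below is illustrative: `http_status` and `check_endpoint` are hypothetical helper names, and treating only HTTP 200 as success is an assumption about how these services respond.

```shell
# http_status: print only the HTTP status code of a request (requires curl).
# All arguments are passed through to curl unchanged.
http_status() {
  curl -s -o /dev/null -w '%{http_code}' "$@"
}

# check_endpoint: hypothetical helper; prints PASS or FAIL for a named endpoint.
# Usage: check_endpoint <name> <curl arguments...>
check_endpoint() {
  name=$1
  shift
  # curl prints 000 as the status code when the connection itself fails.
  code=$(http_status "$@") || true
  if [ "$code" = "200" ]; then
    echo "PASS $name"
  else
    echo "FAIL $name (HTTP $code)"
    return 1
  fi
}
```

A possible call for the TGI service is `check_endpoint tgi "http://${host_ip}:8008/generate" -X POST -H 'Content-Type: application/json' -d '{"inputs":"What is Deep Learning?","parameters":{"max_new_tokens":16}}'`. The LLM microservice and MegaService requests can be checked the same way by passing their curl arguments after the name.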

🚀 Launch the UI¶

Open this URL http://{host_ip}:5173 in your browser to access the frontend.

🚀 Launch the React UI (Optional)¶

To access the React-based FaqGen frontend, modify the UI service in the compose.yaml file: replace the faqgen-xeon-ui-server service with the faqgen-xeon-react-ui-server service, using the config below:

faqgen-xeon-react-ui-server:
  image: opea/faqgen-react-ui:latest
  container_name: faqgen-xeon-react-ui-server
  environment:
    - no_proxy=${no_proxy}
    - https_proxy=${https_proxy}
    - http_proxy=${http_proxy}
  ports:
    - 5174:80
  depends_on:
    - faqgen-xeon-backend-server
  ipc: host
  restart: always

Open this URL http://{host_ip}:5174 in your browser to access the React-based frontend.

Create FAQs from Text input

Create FAQs from Text Files